AI is already embedded in healthcare workflows. The real question is whether it will reduce risk or quietly amplify it.

Last week, Ntracts executives joined healthcare leaders at the IPMI Healthcare GCI forum to examine a pressing question for our industry: will AI reduce risk in healthcare contracting, or will it amplify weaknesses that already exist in our systems? The perspective below reflects the core themes from that discussion.

Healthcare is at an inflection point. AI is no longer experimental. It’s influencing how organizations review contracts, interpret reimbursement terms and monitor performance across complex provider networks.

But healthcare isn’t a low-stakes environment.

Errors do not simply slow down a workflow. They can create compliance exposure, reimbursement leakage and patient access challenges. That reality demands a different level of discipline. The question isn’t whether AI is powerful. It’s whether it’s meaningful in environments where precision directly affects revenue integrity and regulatory accountability.

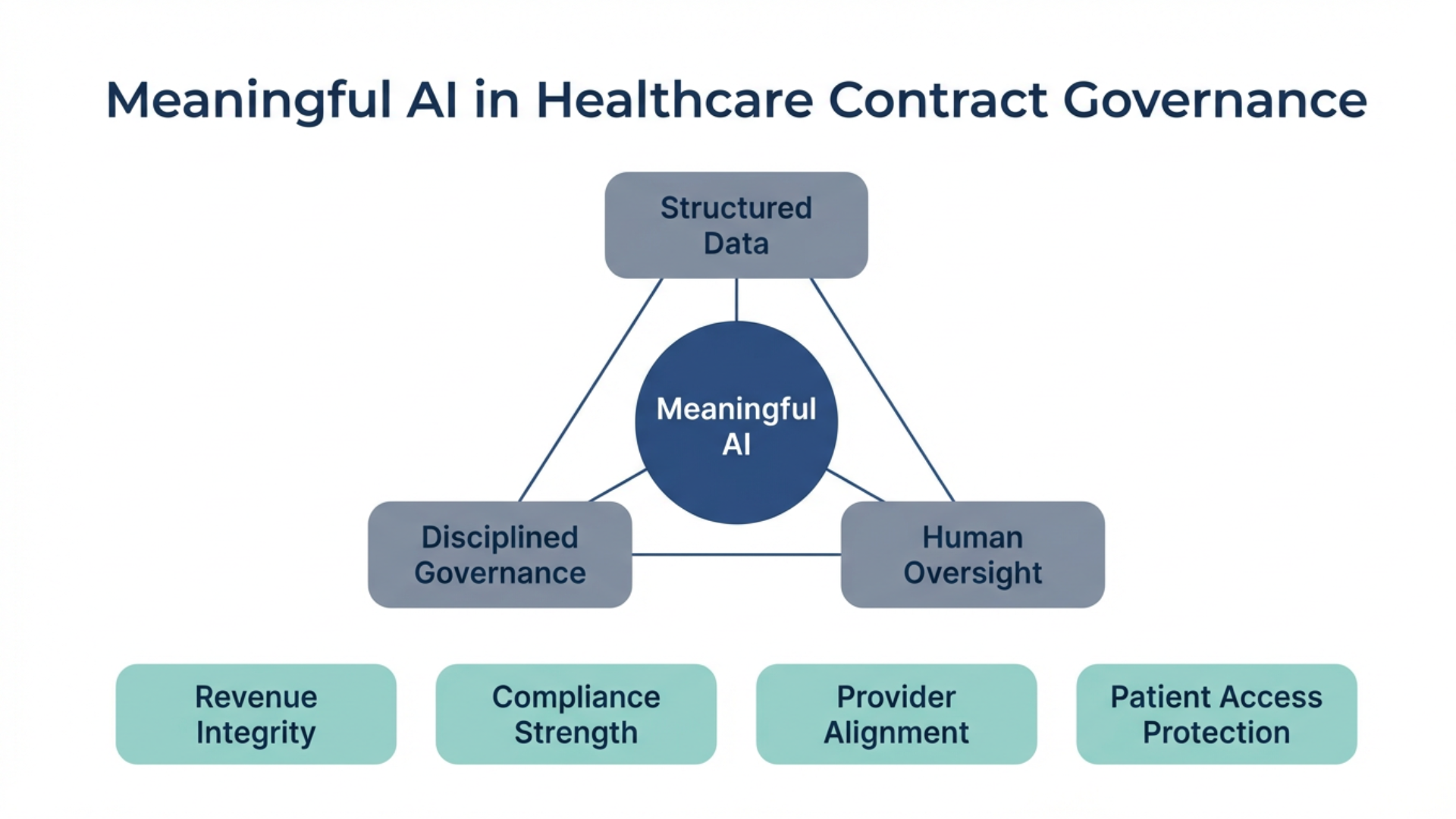

Meaningful AI in healthcare depends on three foundations: structured data, disciplined governance and human oversight. Without those elements in place, even advanced models can introduce risk into processes that require accuracy and traceability.

In healthcare contracting, language carries financial and regulatory weight. A reimbursement modifier, a carve-out or a termination clause can materially change performance outcomes. The GPT-4 Technical Report documents measurable variability across reasoning tasks and evaluation settings, including differences influenced by prompt construction and task framing.¹ The Stanford AI Index further shows that leading large language models continue to exhibit nontrivial error rates on complex reasoning benchmarks.²

If phrasing and context alone can influence performance, consider the implications within a 75-page healthcare agreement. In that setting, precision isn’t an enhancement. It’s structural to financial stability and compliance.

There is also the issue of confidence versus correctness. Benchmarks such as TruthfulQA demonstrate that advanced language models can produce incorrect or misleading responses, particularly under adversarial or ambiguous conditions.³ While performance continues to improve, error rates remain material in uncontrolled environments.²

In healthcare contracting, that distinction matters. Would you allow a system with a meaningful error rate to interpret indemnification language, trigger an auto-renewal or calculate downside risk exposure in a value-based agreement? In this environment, error isn’t theoretical. It has financial and compliance consequences.

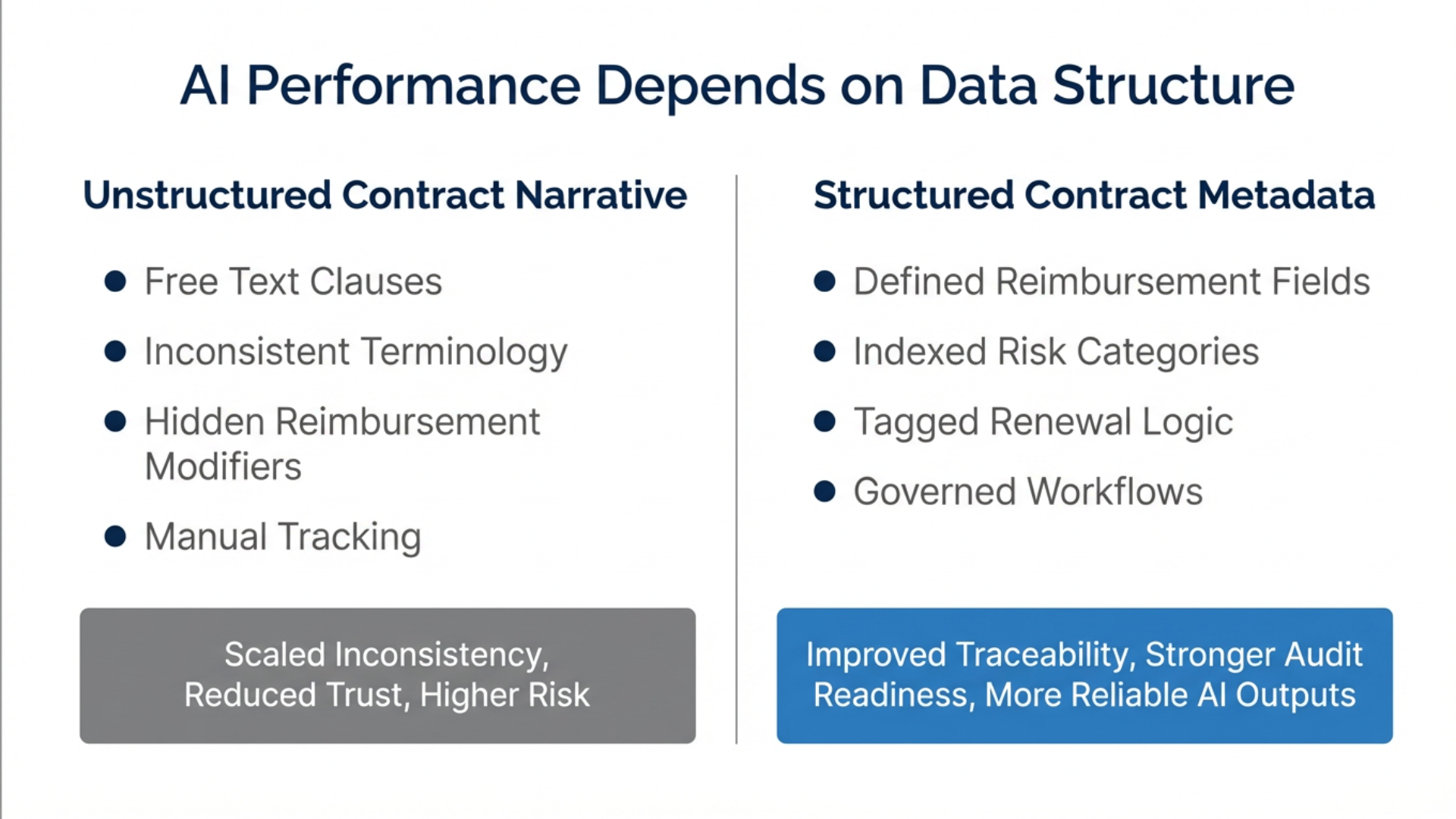

Many organizations are also exploring whether AI can be applied broadly across entire contract repositories to surface insight at scale. The answer depends heavily on data quality. Gartner has estimated that poor data quality costs organizations an average of $12.9 million per year.⁵ IBM research reports that many business leaders do not fully trust their organizational data and that a significant portion of AI project effort is spent preparing and structuring data before deployment.⁶ MIT Sloan Management Review has documented how data inconsistency and bias propagation can materially impact machine learning performance in enterprise settings.⁷

AI does not correct flawed data. It extends it.

If underlying contract information is inconsistent, incomplete or poorly structured, AI can scale that inconsistency across the enterprise. In value-based reimbursement environments, scaled inconsistency becomes scaled financial exposure.

Long-form healthcare agreements add another layer of complexity. Critical terms are often embedded in appendices, carve-outs or escalation provisions. Research from Stanford on long-context processing demonstrates that model accuracy can degrade when relevant information appears in the middle of extended documents.⁴ When AI summarizes an entire agreement at once, subtle but consequential provisions in the middle of the document may be overlooked.

Segmented review and structured extraction improve reliability. AI can accelerate throughput and surface patterns. Experienced professionals remain essential to validate interpretation and apply contextual understanding of reimbursement models, compliance obligations and provider relationships.
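To make segmented review concrete, here is a minimal sketch in Python. It assumes that sections of an agreement begin with a heading line starting with "Section", "Article", "Appendix" or "Exhibit"; that pattern, the function name `split_into_segments` and the sample text are illustrative assumptions, not a description of any particular product. Real agreements vary widely and would need a more careful parser.

```python
import re

# Hypothetical heading pattern: a line that starts with one of these words
# followed by an identifier. Real agreements use many other conventions.
HEADING = re.compile(
    r"^(Section|Article|Appendix|Exhibit)[ \t]+\S+.*$", re.MULTILINE
)


def split_into_segments(agreement_text):
    """Return a list of (heading, body) pairs, one per detected heading."""
    matches = list(HEADING.finditer(agreement_text))
    segments = []
    for i, match in enumerate(matches):
        body_start = match.end()
        # The body runs until the next heading, or to the end of the text.
        if i + 1 < len(matches):
            body_end = matches[i + 1].start()
        else:
            body_end = len(agreement_text)
        heading = match.group(0).strip()
        body = agreement_text[body_start:body_end].strip()
        segments.append((heading, body))
    return segments


# Illustrative sample text, not taken from a real agreement.
sample = """Section 1 Term
This agreement renews automatically each year.
Section 2 Reimbursement
Rates follow the attached fee schedule.
Appendix A Carve-Outs
Implant costs are reimbursed separately.
"""

segments = split_into_segments(sample)
for heading, body in segments:
    # Each segment would be reviewed on its own and then validated by a person.
    print(f"{heading}: {body}")
```

Reviewing each segment separately keeps an appendix or carve-out from being diluted by the rest of the document, and it gives the human reviewer a specific heading to check against.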

Importantly, meaningful AI performance gains are often driven more by data architecture than by model size. McKinsey research has found that organizations with mature data governance and operating models are significantly more likely to achieve measurable AI impact.⁸ Structured, labeled datasets improve reliability and oversight.

In healthcare contract governance, structure means clearly defined reimbursement fields, indexed risk categories, tagged termination logic and controlled renewal tracking. When AI operates against structured metadata rather than free narrative text, hallucination risk decreases and traceability increases. In healthcare, traceability supports audit readiness, and audit readiness supports compliance.
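As an illustration of what structured metadata can look like, the sketch below models a contract as a small Python record and answers a renewal question from fields rather than from narrative text. The field names, the `ContractRecord` class and the sample values are all hypothetical; an actual schema would be defined by the organization's own governance process.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class ContractRecord:
    """Hypothetical structured view of one agreement."""
    contract_id: str
    risk_category: str     # e.g. "upside-only" or "two-sided"
    auto_renews: bool
    renewal_date: date
    notice_days: int       # notice required to stop an auto-renewal
    source_section: str    # where the term appears, kept for traceability

    def notice_deadline(self):
        """Last day on which non-renewal notice can be given."""
        return self.renewal_date - timedelta(days=self.notice_days)


def renewals_needing_action(records, today, window_days):
    """Auto-renewing contracts whose notice deadline falls inside the window."""
    cutoff = today + timedelta(days=window_days)
    return [
        r for r in records
        if r.auto_renews and today <= r.notice_deadline() <= cutoff
    ]


# Illustrative records, not real contract data.
records = [
    ContractRecord("C-001", "two-sided", True, date(2025, 7, 1), 90, "Section 12.2"),
    ContractRecord("C-002", "upside-only", True, date(2026, 1, 1), 60, "Section 9.1"),
    ContractRecord("C-003", "two-sided", False, date(2025, 5, 1), 30, "Section 14.4"),
]

due = renewals_needing_action(records, today=date(2025, 3, 15), window_days=30)
for r in due:
    print(r.contract_id, r.notice_deadline(), r.source_section)
```

Because every answer comes from a named field with a recorded source section, a reviewer can trace the result back to the agreement instead of trusting a generated summary.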

Research also reinforces the value of human-AI collaboration. A 2023 NBER working paper found that AI-assisted workers experienced productivity gains averaging 14% overall, with gains as high as 35% among less experienced workers.⁹ A Harvard Business School field study demonstrated that human and AI teams can outperform either humans alone or AI alone on knowledge-intensive tasks.¹⁰

The pattern is clear. AI enhances capacity. Human oversight protects correctness.

There is an important distinction between free generation and grounded retrieval. Research on retrieval-augmented generation shows that grounding responses in retrieved source material improves factual consistency and reduces hallucination risk.¹¹ Grounding also makes each answer traceable to the clause it came from, so a reviewer can verify it. That transparency strengthens trust. In healthcare contracting, transparency is an extension of governance.
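The retrieval half of that idea can be sketched in a few lines of Python. This example uses simple keyword overlap to pick clauses, which is a stand-in for illustration only: retrieval-augmented systems typically use learned embeddings, and no language model is called here. The clause IDs, clause text, stopword list and the `retrieve` function are all hypothetical.

```python
import re

# A tiny, illustrative stopword list; a real system would use a fuller one.
STOPWORDS = {"a", "an", "the", "is", "to", "of", "for", "with", "this",
             "what", "how", "much", "may", "each"}


def tokenize(text):
    """Lowercase the text and return its set of non-stopword words."""
    return set(re.findall(r"[a-z]+", text.lower())) - STOPWORDS


def retrieve(question, clauses, top_k=2):
    """Return up to top_k (clause_id, text) pairs that share words with the question."""
    question_words = tokenize(question)
    scored = []
    for clause_id, text in clauses.items():
        overlap = len(question_words & tokenize(text))
        if overlap > 0:
            scored.append((overlap, clause_id))
    # Highest overlap first; clause ID breaks ties for a stable order.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [(clause_id, clauses[clause_id]) for _, clause_id in scored[:top_k]]


# Illustrative clauses, not taken from a real agreement.
clauses = {
    "4.1": "Reimbursement for outpatient services follows the attached fee schedule.",
    "7.2": "Either party may terminate this agreement with 120 days written notice.",
    "9.3": "Each party shall indemnify the other against third party claims.",
}

results = retrieve("How much notice is required to terminate?", clauses)
for clause_id, text in results:
    # The clause ID travels with the text so the answer can cite its source.
    print(f"[{clause_id}] {text}")

no_match = retrieve("What is the parking policy?", clauses)
```

When nothing relevant is retrieved, as in the second query, the list is empty. A grounded workflow can treat that as "not found in the agreement" and route the question to a person rather than letting a model generate an unsupported answer.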

Healthcare’s future does not depend on deploying AI everywhere. It depends on deploying AI intentionally, within structured environments where governance protects performance.

Meaningful AI in healthcare is structured. It’s segmented. It’s grounded in source data. And it’s validated by professionals who understand reimbursement, compliance and the operational realities of provider organizations.

When those elements align, revenue integrity improves. Compliance posture strengthens. Provider relationships are supported. Patient access is better protected.

AI is powerful. In healthcare, power must be paired with discipline. The organizations that lead will be those that align meaningful AI with structured data and strong contract governance.

References

1. OpenAI. GPT-4 Technical Report. 2023.

2. Stanford Institute for Human-Centered Artificial Intelligence. AI Index Report 2024.

3. Lin, S., Hilton, J., Evans, O. TruthfulQA: Measuring How Models Mimic Human Falsehoods. 2022.

4. Liu, N. F., et al. Lost in the Middle: How Language Models Use Long Contexts. 2023.

5. Gartner. How to Create a Data and Analytics Strategy. Estimate on cost of poor data quality.

6. IBM Institute for Business Value. The AI Ladder: Accelerating AI Adoption Through Data and Trust.

7. MIT Sloan Management Review. Research on data quality, bias propagation and machine learning performance.

8. McKinsey & Company. The State of AI Reports, 2022–2023.

9. Brynjolfsson, E., Li, D., Raymond, L. Generative AI at Work. NBER Working Paper. 2023.

10. Harvard Business School. Navigating the Jagged Technological Frontier. 2023.

11. Lewis, P., et al. Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks. 2020.